Update: A free tool is available that does all this for you in a GUI: Loopback Check configuration Tool released – free download

Hi dear friends!

401.1 Access denied…

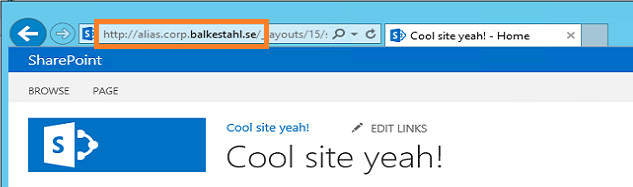

If you try to access your newly created web application with a real nice FQDN or NetBIOS name and you end up getting a 401.1 Access denied…

Even after adding the site to the local intranet zone in IE…

Even after being prompted three times and filling in the correct credentials…

After setting up Search to crawl your sites in a small farm with crawl and web services on the same server…

You check and double-check your credentials, you add yourself as the farm admin, you try logging on with the farm account, but nothing…still 401.1…

I know this has been written about many times before, but some things still seem to be missing…

Everyone seems comfortable with the sparse description of how to ‘add hosts to the list’, which is pretty much what you do when configuring the loopback check the ‘secure way’. You can also disable the loopback check completely, but why do that if there is no real reason to? Read Spencer Harbar’s excellent post on the topic if you need an explanation of why this is so. It is a few years old, but it is still the truth!

KB article 896861 covers this, but it is an old one and its title does not really tell you that it is the one you are looking for. The instruction ‘type the host name or the host names for the sites that are on the local computer, and then click OK.’ is not crystal clear…

What you need to do is this step by step:

In ‘Metro’ mode, type regedit

Regedit will most likely be the only result; press Enter.

In regedit, find the following registry key: HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\Lsa\MSV1_0

First…

then…

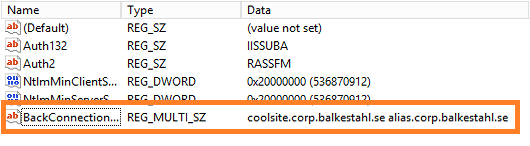

Now, create a Multi-String Value under the MSV1_0 key.

Name the new Multi-String Value ‘BackConnectionHostNames’ and press Enter.

Right-click the value BackConnectionHostNames and choose Modify.

Add the host name of the URL you want to be able to access from a local browser on the server (the host name only, without http:// or a path).

Don’t know why, but I always seem to get this. Click OK.

Voilà!

To add multiple URLs to the list of ‘trusted’ URLs, simply put each one on its own line.

That will look like this.

To be extra sure that nothing else will sabotage functionality, check that the URLs are added to DNS.

(Or to the local hosts file.)

Check that the URLs are added as bindings in IIS.

Verify that the URLs are correct and are added to AAM.

Make sure that the URL is added to the Local Intranet Zone in Internet Explorer (if you need to browse the site from the server, NOT RECOMMENDED!).
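The DNS, IIS binding and AAM checks above can also be done from an elevated PowerShell prompt. This is only a rough sketch, assuming Windows Server 2012 or later (for Resolve-DnsName), the WebAdministration module and the SharePoint snap-in on the server. The host name is the example used later in this post; replace it with your own.

```powershell
# Host name to check; taken from the example in this post, replace with your own.
$hostName = 'coolsite.corp.balkestahl.se'

# 1. Name resolution: does the name resolve from this server?
Resolve-DnsName -Name $hostName

# 2. IIS: list bindings whose host header matches the name.
Import-Module WebAdministration
Get-WebBinding | Where-Object { $_.bindingInformation -like "*:$hostName" }

# 3. AAM: list alternate access mappings whose incoming URL contains the name.
Add-PSSnapin Microsoft.SharePoint.PowerShell -ErrorAction SilentlyContinue
Get-SPAlternateURL | Where-Object { $_.IncomingUrl -like "*$hostName*" }
```

If step 2 or step 3 returns nothing, the binding or the mapping is probably missing for that name.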

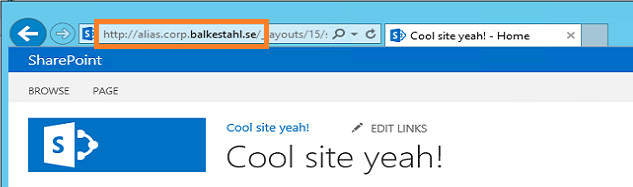

Try to access the URL in a browser.

And the other URL.

Done!

Doing the same using PowerShell

Using PowerShell to configure the loopback check requires two steps:

1. Add the multistring value to the registry

Get-Item -Path "HKLM:\System\CurrentControlSet\Control\Lsa\MSV1_0" | New-ItemProperty -Name "BackConnectionHostNames" -Value ("coolsite.corp.balkestahl.se", "alias.corp.balkestahl.se") -PropertyType "MultiString"

2. Restart the IISADMIN service

Restart-Service IISADMIN

1. Add the multistring value to the registry

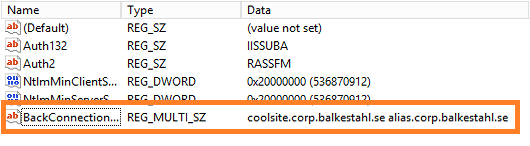

Given that you have everything set up correctly (your AAMs, your DNS entries, the URL added to the Local Intranet zone in IE, and so forth), you can use this single PowerShell command to exclude the URLs for your sites from the loopback check. This way, you don’t have to disable the loopback check at all (way better security).

The following command will add my two URLs to the exclusion list. Edit the values to add your own URLs.

| Run this in a PowerShell prompt running in elevated mode/as Administrator |

Get-Item -Path "HKLM:\System\CurrentControlSet\Control\Lsa\MSV1_0" | New-ItemProperty -Name "BackConnectionHostNames" -Value ("coolsite.corp.balkestahl.se", "alias.corp.balkestahl.se") -PropertyType "MultiString"

If everything is done right, running this will show the following:

This is how it will look if it succeeds!

If you get ‘The property already exists.’, the ‘BackConnectionHostNames’ value is already in the registry. Check in Registry Editor whether you can delete it or whether it holds other values that need to stay.
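If the existing value holds entries that must stay, one option is to merge your host names into it instead of deleting it. The sketch below is one way to do that, not the method used in this post. Merge-HostName and Add-BackConnectionHostName are hypothetical helper names made up for this example.

```powershell
# Hypothetical helper: merge new host names into an existing list,
# skipping blank entries and case-insensitive duplicates. No registry access.
function Merge-HostName {
    param(
        [string[]]$Existing = @(),
        [string[]]$New = @()
    )
    $result = New-Object System.Collections.Generic.List[string]
    $all = @($Existing) + @($New)
    foreach ($name in $all) {
        if ([string]::IsNullOrWhiteSpace($name)) { continue }
        $trimmed = $name.Trim()
        # -notcontains compares strings case-insensitively by default
        if ($result -notcontains $trimmed) { $result.Add($trimmed) }
    }
    # The leading comma keeps the result an array even with a single entry
    return ,$result.ToArray()
}

# Hypothetical helper: create the value if it is missing, otherwise extend it.
function Add-BackConnectionHostName {
    param([Parameter(Mandatory = $true)][string[]]$HostName)
    $key = 'HKLM:\SYSTEM\CurrentControlSet\Control\Lsa\MSV1_0'
    $valueName = 'BackConnectionHostNames'
    # $current stays $null when the value does not exist yet
    $current = (Get-ItemProperty -Path $key -Name $valueName -ErrorAction SilentlyContinue).$valueName
    $merged = Merge-HostName -Existing $current -New $HostName
    if ($null -eq $current) {
        New-ItemProperty -Path $key -Name $valueName -Value $merged -PropertyType MultiString | Out-Null
    } else {
        Set-ItemProperty -Path $key -Name $valueName -Value $merged
    }
    return ,$merged
}
```

From an elevated prompt, a call such as Add-BackConnectionHostName -HostName "coolsite.corp.balkestahl.se", "alias.corp.balkestahl.se" should leave any entries that were already in the list untouched. The IISADMIN restart is still needed afterwards.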

After a successful execution, check the registry to verify.
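If you prefer to stay in the PowerShell prompt, a small sketch like the following reads the value back. It assumes the same key and value name as the command above.

```powershell
$key = 'HKLM:\SYSTEM\CurrentControlSet\Control\Lsa\MSV1_0'

# Prints one host name per line; an error here means the value does not exist.
(Get-ItemProperty -Path $key -Name 'BackConnectionHostNames').BackConnectionHostNames
```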

2. Restart the IISADMIN service

Now you have to restart the IISADMIN service in order for it to ‘reread’ the registry values and implement our changes.

This is easy. In a PowerShell prompt running in elevated mode/as Administrator:

Restart-Service IISADMIN

| Note the typo/bug in the output text: it says stopping twice, but what it actually does is stop and then start the service |

Done!

The command line in step 1 adds two (2) entries to the list, coolsite.corp.balkestahl.se and alias.corp.balkestahl.se. If you need to add more URLs, add them to the -Value list, like: -Value ("coolsite.corp.balkestahl.se", "alias.corp.balkestahl.se", "mycoolnetbiosname", "extraname.corp.balkestahl.se").

| Make sure that the double quotes are formatted properly, as plain straight quotes, if you copy from this post! |

That would make the command

Get-Item -Path "HKLM:\System\CurrentControlSet\Control\Lsa\MSV1_0" | New-ItemProperty -Name "BackConnectionHostNames" -Value ("coolsite.corp.balkestahl.se", "alias.corp.balkestahl.se", "mycoolnetbiosname", "extraname.corp.balkestahl.se") -PropertyType "MultiString"

and

Restart-Service IISADMIN -Force

References:

You receive error 401.1 when you browse a Web site that uses Integrated Authentication and is hosted on IIS 5.1 or a later version

http://support.microsoft.com/kb/896861

DisableLoopbackCheck & SharePoint: What every admin and developer should know. (Spencer Harbar folks)

http://www.harbar.net/archive/2009/07/02/disableloopbackcheck-amp-sharepoint-what-every-admin-and-developer-should-know.aspx

Can’t crawl web apps you KNOW you should be able to crawl (Todd Klindt’s oldie but goodie)

http://www.toddklindt.com/blog/Lists/Posts/Post.aspx?ID=107

Thanks to:

As always, Mattias Gutke! Now at CAG. Always a great help and second opinion!

___________________________________________________________________________________________________

Enjoy!

Regards
